How to extract data from ACORD forms

This blog gives an overview of different methods for extracting structured text from ACORD forms using OCR, with the goal of automating manual data entry.

Insurance is a paperwork-intensive sector. Almost every step of an insurer's daily operations, from applying for a policy to filing a claim, has a standardized form or document associated with it.

Among these forms, most of us have probably heard of the ACORD forms (ACORD stands for the Association for Cooperative Operations Research and Development). These are a variety of forms used across different lines of insurance, providing a tangible medium for exchanging and storing information in a manner that is common to all agencies and their agents. Of course, manually entering the forms and digitizing them later isn't something anyone enjoys. To maintain them more efficiently, one must look into intelligent algorithms that can read these forms irrespective of the form type or template and save the contents in a structured manner. In this blog, we'll look into different ways of extracting information from ACORD forms using OCR and machine learning techniques. Below is the table of contents.

- What are ACORD forms?

- Information Extraction from ACORD forms using OCR

- Why does normal OCR not scale?

- An end-to-end pipeline with deep learning for IE

What are ACORD forms?

ACORD, short for the Association for Cooperative Operations Research and Development, is the insurance industry's nonprofit standards body: it sets forth the common language and documentation that insurance agencies use. It is also a resource for other things like object technology, EDI, XML, and electronic commerce, both in the United States and around the world. Its forms are now available in a variety of formats, including printable PDF, electronic fillable, and eForms. One core advantage of using ACORD's standardized forms is that they allow for increased efficiency, accuracy, and speed of information processing. The list of forms is long; a few are listed below with their form IDs.

- 25 – Certificate of Liability Insurance

- 27 – Evidence of Property Insurance

- 80 – Homeowner Application

- 90 – Personal Auto Application

- 125 – Commercial Insurance Application

- 126 – Commercial General Liability Section

- 127 – Business Auto Section

- 130 – Workers Compensation Application

- 131 – Umbrella / Excess Section

- 137 – Commercial Auto

- 140 – Property Section

You can check more forms from the link here. In the next section, let's discuss how we can build information extraction algorithms for ACORD forms.

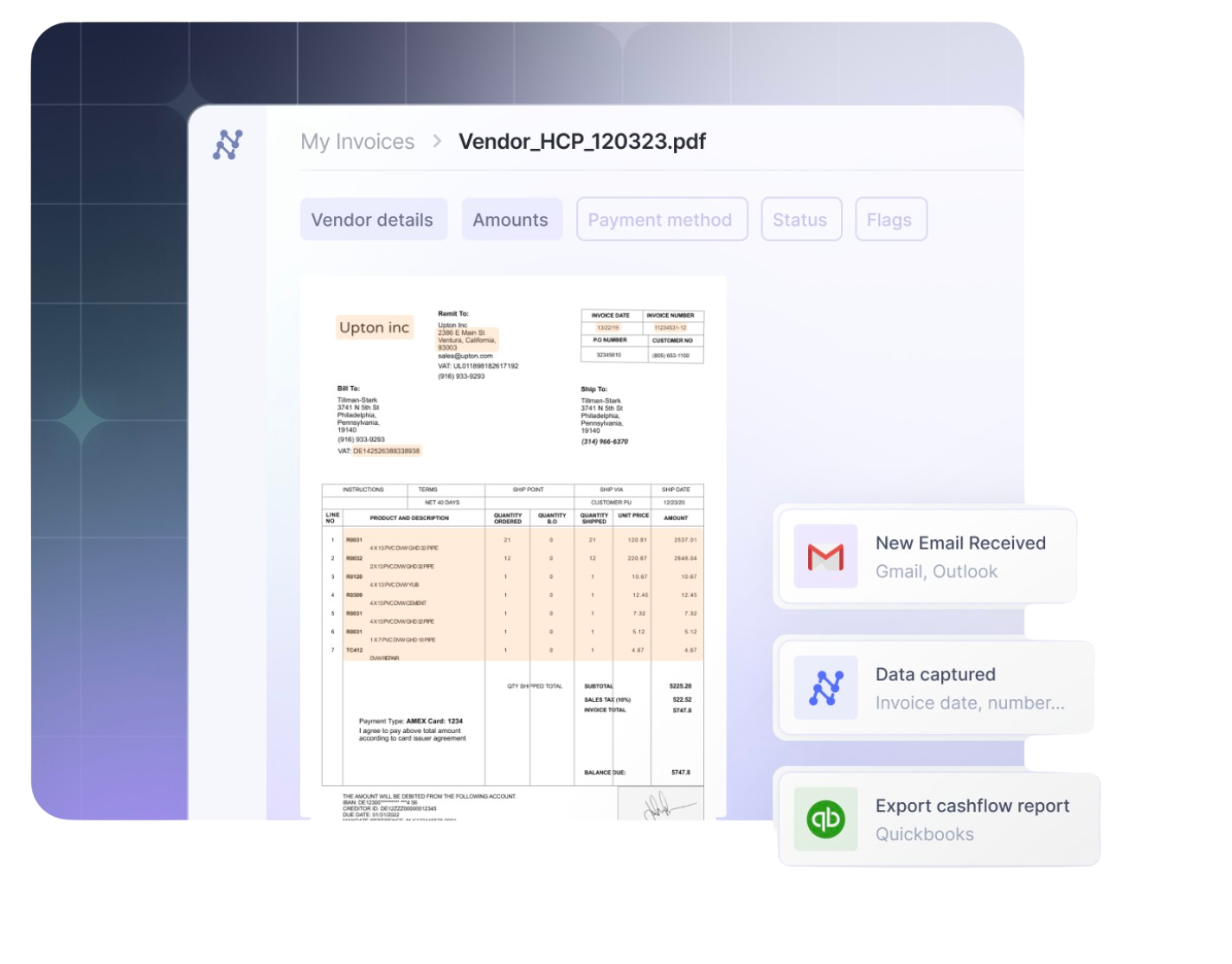

Try Nanonets' AI-based OCR for extracting information from ACORD forms and automating the data entry process!

Information Extraction from ACORD forms using OCR

Several organizations that receive high volumes of ACORD forms continue to process them manually. This requires employees to enter and maintain the data in a database, which is typically labour-intensive, expensive, and error-prone. With a well-built automated system, insurance organizations can realize a significant ROI through reduced data entry costs, while also improving processing speed and accuracy. So here comes the question: how can one build an information extraction (IE) tool for scanned forms? Building a state-of-the-art IE algorithm requires a well-designed pipeline, and at its heart is Optical Character Recognition (OCR). If you're not familiar with it, think of OCR as a computer algorithm that converts images of typed or handwritten text into machine-readable text.

OCR techniques are not new, but they have been evolving continuously. One popular and commonly used OCR engine is Tesseract, an open-source engine written in C++ that was originally developed at Hewlett-Packard and later sponsored by Google; Python users typically call it through wrappers such as pytesseract. However, even popular tools like Tesseract fail to extract text in some complex scenarios, and on their own they simply return the text found in an image, without any field-level structure or rules.
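
To make the "text without structure" point concrete, here is a minimal sketch that parses Tesseract's TSV output (one row per detected word, with position and confidence). It assumes the standard TSV column layout; the sample rows are invented for illustration, and `parse_words` is a hypothetical helper name, not part of Tesseract.

```python
import csv
import io

# Illustrative sample in the column layout of Tesseract's TSV output.
# The rows are invented for this example, not taken from a real form.
SAMPLE_TSV = (
    "level\tpage_num\tblock_num\tpar_num\tline_num\tword_num\t"
    "left\ttop\twidth\theight\tconf\ttext\n"
    "5\t1\t1\t1\t1\t1\t40\t50\t90\t20\t96\tPRODUCER\n"
    "5\t1\t1\t1\t2\t1\t40\t80\t60\t20\t91\tAcme\n"
    "5\t1\t1\t1\t2\t2\t105\t80\t110\t20\t88\tInsurance\n"
    "5\t1\t1\t1\t3\t1\t40\t110\t30\t20\t-1\t \n"
)


def parse_words(tsv_text, min_conf=0.0):
    """Return one dict per recognised word: text, box position and confidence."""
    reader = csv.DictReader(
        io.StringIO(tsv_text), delimiter="\t", quoting=csv.QUOTE_NONE
    )
    words = []
    for row in reader:
        text = (row["text"] or "").strip()
        conf = float(row["conf"])
        if not text or conf < min_conf:
            continue  # skip empty cells and low-confidence tokens
        words.append({
            "text": text,
            "left": int(row["left"]),
            "top": int(row["top"]),
            "width": int(row["width"]),
            "height": int(row["height"]),
            "conf": conf,
        })
    return words


words = parse_words(SAMPLE_TSV)
```

The result is a flat list of words and boxes. Nothing in it says that "Acme Insurance" is the value of the "PRODUCER" field; that association has to be built on top of the OCR output.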

In the next section, let’s review why OCR alone cannot scale for information extraction.

Why does normal OCR not scale?

As discussed, the core job of OCR is to extract all the text from a given document irrespective of template, layout, language and font. Our goal, however, is to pick out only the critical information in an ACORD form, such as the customer name, form type and insurance details, and that is not handled by OCR engines like Tesseract on their own. In this section, we'll discuss where and how plain OCR falls short.

Orientation and Scaling

Orientation is one of the most common problems when performing OCR. On high-quality, well-aligned scans, OCR is accurate enough to produce fully searchable, editable files. However, when a scan is skewed or distorted, or when the text is blurred, OCR tools may have difficulty reading it and can produce inaccurate results. To overcome this, we need to be familiar with techniques like image transforms and de-skewing, which help us bring the image into a proper alignment.
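
As a rough illustration of de-skewing, the sketch below estimates the skew angle of a text line from a handful of baseline points with a least-squares fit, then rotates the points back. It is an assumption-laden toy: the function names are hypothetical, the points would in practice come from detected word boxes, and a real pipeline would apply the rotation to the image pixels with an imaging library.

```python
import math


def estimate_skew_degrees(points):
    """Angle (degrees) of the least-squares line through (x, y) baseline points."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    return math.degrees(math.atan2(sxy, sxx))


def rotate_point(point, angle_degrees, center=(0.0, 0.0)):
    """Rotate a point by angle_degrees about center (standard 2D rotation)."""
    theta = math.radians(angle_degrees)
    dx, dy = point[0] - center[0], point[1] - center[1]
    return (
        center[0] + dx * math.cos(theta) - dy * math.sin(theta),
        center[1] + dx * math.sin(theta) + dy * math.cos(theta),
    )


# Synthetic baseline of a text line that was scanned 3 degrees off.
baseline = [(x, 100 + x * math.tan(math.radians(3))) for x in range(0, 500, 50)]
angle = estimate_skew_degrees(baseline)

# Rotating by the negative angle brings the baseline back to horizontal.
deskewed = [rotate_point(p, -angle, center=baseline[0]) for p in baseline]
```

After correction, every point in `deskewed` shares (up to floating-point error) the same vertical coordinate, which is what a horizontal text line looks like.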

No Key-Value Pair Extraction

Key-value pair extraction has become one of the essential tasks in IE from forms and documents. It allows us to extract only the necessary information and save it in whatever format the requirements call for. For example, consider the Workers Compensation form. It has specific fields with labels like Client Name, Attorney Name, Phone Numbers, Email IDs, Tracking Number and so on. The goal of key-value pair extraction is to identify these labels (keys) and the values associated with them. We also have to make sure the algorithm works across templates, that is, the same algorithm should remain applicable to documents in other formats. Tesseract doesn't do this for us; we have to add dedicated deep learning algorithms on top of it to achieve this task.
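
To show what the task looks like, here is a deliberately simple rule-based baseline, not the deep learning approach discussed later. It assumes OCR has already produced text segments with pixel positions, and pairs each known label with the nearest segment to its right, or failing that, the nearest one below. All names, tolerances and sample data are hypothetical.

```python
def normalise_label(text):
    """Lower-case a segment and drop a trailing colon: 'Phone Number:' -> 'phone number'."""
    return text.strip().rstrip(":").strip().lower()


def find_value(label, candidates, row_tol=10, col_tol=30):
    """Nearest candidate to the right on the same row, else the nearest one below."""
    right = [c for c in candidates
             if abs(c["top"] - label["top"]) <= row_tol and c["left"] > label["left"]]
    if right:
        return min(right, key=lambda c: c["left"])
    below = [c for c in candidates
             if c["top"] > label["top"] + row_tol
             and abs(c["left"] - label["left"]) <= col_tol]
    if below:
        return min(below, key=lambda c: c["top"])
    return None


def extract_pairs(tokens, labels):
    """Map each known label found in tokens to the text of its nearest value."""
    wanted = {normalise_label(name) for name in labels}
    label_tokens = [t for t in tokens if normalise_label(t["text"]) in wanted]
    candidates = [t for t in tokens if normalise_label(t["text"]) not in wanted]
    pairs = {}
    for label in label_tokens:
        value = find_value(label, candidates)
        key = label["text"].strip().rstrip(":").strip()
        pairs[key] = value["text"] if value else None
    return pairs


# Made-up OCR segments with pixel positions (left, top), for illustration only.
TOKENS = [
    {"text": "Client Name:", "left": 40, "top": 100},
    {"text": "Jane Doe", "left": 200, "top": 102},
    {"text": "Email ID", "left": 400, "top": 100},
    {"text": "jane@example.com", "left": 405, "top": 125},
    {"text": "Phone Number:", "left": 40, "top": 140},
    {"text": "555-0100", "left": 200, "top": 141},
]

pairs = extract_pairs(TOKENS, ["Client Name", "Email ID", "Phone Number"])
```

Rules like these depend on a fixed label list and hand-tuned pixel tolerances, so they tend to break as soon as the template changes, which is the motivation for learned models.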

No Fraud and Blurry Checks

ACORD forms are often used for different verifications. Hence an ideal OCR-based solution should also be able to identify whether a given document is original or fake. Below are some checks that can help flag a suspicious image.

- Identify backgrounds for bent or distorted parts.

- Beware of low-quality images.

- Check for blurred or edited texts.
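
One common heuristic for the blur check, offered here as an assumption rather than something the checklist prescribes, is the variance of the Laplacian: sharp images have strong edges and so a high variance, while blurred ones do not. The sketch below works on a grayscale image given as a list of rows; the threshold of 100 is an arbitrary placeholder that would need tuning on real scans.

```python
def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior pixels of a 2D grid."""
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def is_blurry(gray, threshold=100.0):
    """Flag an image as blurry when its Laplacian variance falls below threshold."""
    return laplacian_variance(gray) < threshold


# Two synthetic 8x8 test images: a high-contrast checkerboard and a flat grey patch.
sharp = [[255 if (x + y) % 2 else 0 for x in range(8)] for y in range(8)]
flat = [[128] * 8 for _ in range(8)]
```

Here `is_blurry(flat)` is true and `is_blurry(sharp)` is false. A check like this only covers blur; detecting edited text or tampering needs separate techniques.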

Limited Languages

Imagine having to use a different OCR tool for ACORD forms in each language. Not optimal, right? Most OCR tools, including Tesseract, are trained on a limited set of languages and need the language settings to be configured manually. Hence, we'll have to make sure the OCR we train can automatically identify the language and extract the information.

Now that we’ve seen all the drawbacks of using normal OCR for ACORD forms, in the next section we’ll discuss how one can build an end to end machine learning-based pipeline for automating ACORD forms.

An end-to-end pipeline with deep learning for IE

This section gives you a quick overview of how one can build a machine learning-based pipeline for information extraction. Bear in mind that achieving state-of-the-art performance involves a lot of experimentation and expertise. Now let's get started with loading and processing data.

Data Collection and Loading

Data is at the core of most top automation tools and software, Tesseract included: the algorithms behind them improve with the amount of data they are trained on. In our use case, to build an IE algorithm we must first have a fair amount of labelled ACORD forms. We'll have to make sure they are all of the same size and check that they are correctly scanned and cropped. To make the training process smooth, image transformation techniques such as normalisation and de-skewing are also applied to the training data.
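
The two preprocessing steps mentioned above, bringing images to a common size and normalising pixel values, can be sketched in a few lines. This is a dependency-free toy on grayscale images stored as lists of rows; the function names are hypothetical, and a real pipeline would more likely use an imaging library with better interpolation.

```python
def resize_nearest(gray, out_height, out_width):
    """Resize a 2D grayscale grid with nearest-neighbour sampling."""
    in_height, in_width = len(gray), len(gray[0])
    return [
        [gray[y * in_height // out_height][x * in_width // out_width]
         for x in range(out_width)]
        for y in range(out_height)
    ]


def normalise(gray, max_value=255.0):
    """Scale pixel values from [0, max_value] to [0, 1]."""
    return [[pixel / max_value for pixel in row] for row in gray]


# A tiny 2x2 image upscaled to 4x4 and then normalised.
image = [[0, 255], [255, 0]]
resized = resize_nearest(image, 4, 4)
prepared = normalise(resized)
```

Every form passed through the same two functions ends up with the same shape and value range, which is what most training code expects of its inputs.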

Model Building

Models here are the neural network architectures. If you're new to this field, think of it as an artificial brain through which data is sent, and patterns are learned. There are popular research papers and open source projects on GitHub where we can browse through a few IE models. Now, let's discuss a few popular models that can be used for key-value pair extraction on ACORD forms.

In the paper CUTIE (Learning to Understand Documents with Convolutional Universal Text Information Extractor), Xiaohui Zhao et al. proposed a method for extracting key information from documents such as receipts or invoices and converting the texts of interest into structured data. At the heart of this research are convolutional neural networks applied to text, where the texts are embedded as features with semantic connotations. The model was trained on 4,484 labelled receipts and achieved 90.8% and 77.7% average precision on taxi receipts and entertainment receipts, respectively.

BERTgrid is a popular deep learning approach, built on a language model, for understanding generic documents and performing key-value pair extraction tasks. It also uses convolutional neural networks, based on semantic instance segmentation, to run inference. Overall, the reported mean accuracy on selected document header and line items was 65.48%.

In DeepDeSRT, Schreiber et al. presented an end-to-end system for table understanding in document images. The system contains two consecutive models, one for table detection and one for structured data extraction from the detected tables. The authors report that it outperformed the state-of-the-art methods of the time, achieving F1-measures of 96.77% and 91.44% for table detection and structure recognition, respectively. Models like these can be used specifically for extracting values from tables, such as those that appear in forms.

Model Inferencing

After our model is trained on an adequate number of ACORD form samples, we'll have to perform inference. This is the stage where the trained model is used to predict on test samples; it runs the same kind of forward pass as in training to return the extracted values. If the trained model performs well, with high accuracy, we can go ahead and deploy it. Otherwise, we'll have to retrain the model and tune its hyperparameters.
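
Deciding whether the model "performs well" needs a concrete metric. One simple option, assumed here for illustration, is exact-match precision, recall and F1 over the extracted fields of a test form. The field names and values below are made up.

```python
def field_scores(predicted, expected):
    """Exact-match precision, recall and F1 over extracted key-value fields."""
    made = {key: value for key, value in predicted.items() if value is not None}
    correct = sum(1 for key, value in made.items() if expected.get(key) == value)
    precision = correct / len(made) if made else 0.0
    recall = correct / len(expected) if expected else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Hypothetical ground truth and model output for one test form.
expected = {"insured": "Acme LLC", "policy_number": "GL-123", "producer": "Best Agency"}
predicted = {"insured": "Acme LLC", "policy_number": "GL-128", "producer": None}

precision, recall, f1 = field_scores(predicted, expected)
```

In this example one of two predictions is right (precision 0.5) and one of three true fields is recovered (recall of one third). Averaging such scores over a held-out set gives a number to compare across training runs.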

Model Deployment

Model deployment is one of the more challenging tasks. Many current solutions serve the trained model as an API, and a few provide on-premises installation with Docker. To do this, we should first be familiar with backend Python frameworks like Flask and Django. We restrict ourselves to Python here because most models are trained using Python-based deep learning frameworks like TensorFlow and PyTorch, which makes it easy to integrate trained models with the web. Next, we'll have to write our APIs according to the requirements, so that inference is run on the requested data and the results are returned in the necessary format. To learn more about the deployment process read our blog here.
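
The shape of such an API can be sketched without any framework. The example below uses the plain WSGI interface from the Python standard library instead of Flask or Django, purely to stay dependency-free; a Flask route would follow the same pattern. The `/extract` path, the JSON payload shape and `run_model` are all hypothetical, and `run_model` is a placeholder standing in for the trained model.

```python
import json


def run_model(document_text):
    """Placeholder for the trained model; returns a fixed-shape, made-up result."""
    return {"fields": {}, "characters_received": len(document_text)}


def app(environ, start_response):
    """WSGI entry point: POST /extract with a JSON body like {"text": "..."}."""
    is_extract = (
        environ.get("REQUEST_METHOD") == "POST"
        and environ.get("PATH_INFO") == "/extract"
    )
    if not is_extract:
        start_response("404 Not Found", [("Content-Type", "application/json")])
        return [b'{"error": "not found"}']

    # Read exactly the number of bytes the client declared.
    length = int(environ.get("CONTENT_LENGTH") or 0)
    payload = json.loads(environ["wsgi.input"].read(length) or b"{}")

    body = json.dumps(run_model(payload.get("text", ""))).encode("utf-8")
    start_response("200 OK", [
        ("Content-Type", "application/json"),
        ("Content-Length", str(len(body))),
    ])
    return [body]
```

For local experiments, `app` could be served with `wsgiref.simple_server.make_server("", 8000, app).serve_forever()`; a production setup would normally sit behind a proper WSGI server and add input validation and error handling.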

Exporting to CSV or Excel

After the models are deployed, it's important to be able to export the extracted data into either a CSV file or an Excel sheet. This helps the end user upload or store all the records in databases, CRMs or ERP systems. Beyond record keeping, the export also helps in validating the outputs and spotting errors made while extracting the data.
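
A minimal sketch of the CSV export, using Python's built-in `csv` module. The column names and records are invented for illustration; missing fields are written as empty cells so that gaps are easy to spot during validation.

```python
import csv
import io


def records_to_csv(records, fieldnames):
    """Serialise extracted records to CSV text with a fixed column order."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    for record in records:
        # Missing fields become empty cells instead of raising an error.
        writer.writerow({name: record.get(name, "") for name in fieldnames})
    return buffer.getvalue()


# Hypothetical extraction results for two forms.
RECORDS = [
    {"form_id": "25", "insured": "Acme, LLC", "policy_number": "GL-123"},
    {"form_id": "130", "insured": "Jane Doe"},
]

csv_text = records_to_csv(RECORDS, ["form_id", "insured", "policy_number"])
```

The `csv` module takes care of quoting values that contain commas, such as "Acme, LLC". To produce a file, the same writer can be pointed at a file opened with `newline=""` instead of an in-memory buffer.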

Further Reading

- Extracting Data from Financial PDFs

- The Annual Report Algorithm: Retrieval of Financial Statements and Extraction of Textual Information

Update:

Added more reading material about different approaches in extracting information from financial documents and forms